Install Apache Spark on VMware

Vertica recommends that you investigate the options with your hypervisor vendor. For some hypervisors, different operating systems may perform better than others.

A virtualized Vertica node requires the same disk I/O performance as a non-virtualized one, so configure attached storage for high I/O performance. Vertica recommends that customers use the vioperf utility to validate the actual throughput achieved on each VM. To do this, run vioperf on all cluster nodes simultaneously to determine the maximum disk I/O performance each VM can achieve under heavy I/O load. If you provide storage from a shared storage device, also validate disk I/O performance on the cluster as a whole to make sure the shared resources do not create a bottleneck.
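On a multi-node cluster, a small script can start vioperf on every node at once. The Python sketch below makes several assumptions:

* You have passwordless ssh from an admin machine to every node.
* vioperf is installed at `/opt/vertica/bin/vioperf`.
* `/data` is the directory to test.

The host names, paths, and helper names (`vioperf_command`, `run_on_all_nodes`) are hypothetical. The script only launches the runs concurrently and collects each node's exit code and output. Consult the Vertica documentation for vioperf's own options and for how to interpret its results.

```python
"""Start vioperf on all cluster nodes at the same time (a sketch).

Hypothetical assumptions: passwordless ssh to each node, vioperf at
/opt/vertica/bin/vioperf, and /data as the directory under test.
"""
import subprocess
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Sequence, Tuple

VIOPERF = "/opt/vertica/bin/vioperf"  # assumed install path
DATA_DIR = "/data"                    # hypothetical directory to test

Runner = Callable[[Sequence[str]], Tuple[int, str]]


def vioperf_command(host: str, vioperf: str = VIOPERF, data_dir: str = DATA_DIR) -> List[str]:
    """Build the ssh command that runs vioperf against data_dir on one host."""
    return ["ssh", host, vioperf, data_dir]


def run_via_subprocess(cmd: Sequence[str]) -> Tuple[int, str]:
    """Run a command and return (exit code, combined stdout/stderr text)."""
    proc = subprocess.run(
        list(cmd), stdout=subprocess.PIPE, stderr=subprocess.STDOUT, text=True
    )
    return proc.returncode, proc.stdout


def run_on_all_nodes(hosts: Sequence[str], runner: Runner = run_via_subprocess) -> Dict[str, Tuple[int, str]]:
    """Launch vioperf on every host concurrently and collect the results.

    One worker thread per host makes all runs overlap, so the storage is
    under load from every node at the same time.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(hosts))) as pool:
        futures = {host: pool.submit(runner, vioperf_command(host)) for host in hosts}
        return {host: fut.result() for host, fut in futures.items()}
```

For example, `run_on_all_nodes(["node01", "node02", "node03"])` (hypothetical host names) returns a dict that maps each host to its exit code and captured output. Starting every run at once, rather than one node after another, is what exposes contention on a shared storage device.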

Many hypervisors let you scale out by over-subscribing resources, for example by deploying more virtual CPUs than there are physical CPUs installed in the host hardware.
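To make over-subscription concrete, here is a worked example with hypothetical numbers: four VMs with 8 vCPUs each, running on a host with 16 physical CPU cores. The over-subscription ratio is the total number of vCPUs allocated divided by the number of physical CPUs:

$$
r = \frac{\sum_{i=1}^{n} v_i}{P} = \frac{4 \times 8}{16} = \frac{32}{16} = 2
$$

Here $v_i$ is the number of vCPUs assigned to VM $i$, $n$ is the number of VMs, and $P$ is the number of physical CPU cores in the host. A ratio of 2 (often written 2:1) means the host has allocated twice as many CPUs as it physically has. Any ratio above 1 is over-subscription.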


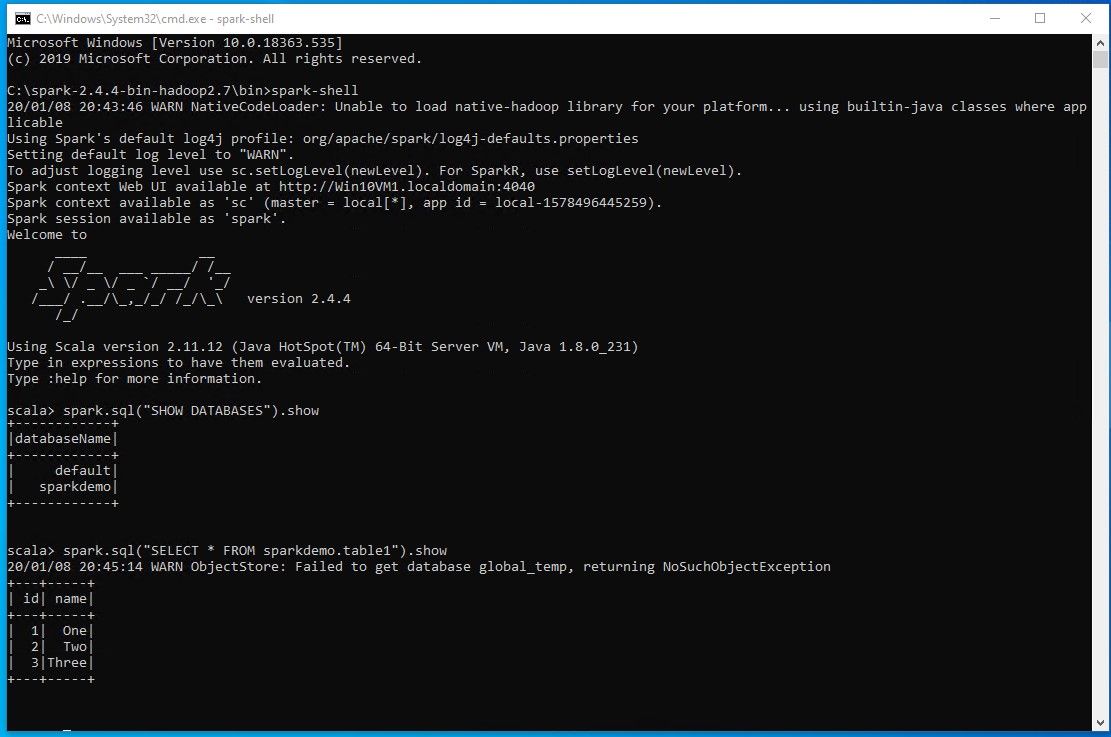

This guide shows how to install Apache Spark on Windows, run sample statements in spark-shell, and start the Spark web UI and history server.

On the spark-shell command line, you can run any Spark statement, such as creating an RDD or getting the Spark version. When you create an RDD with `parallelize`, the shell reports it as a `ParallelCollectionRDD` created at `parallelize at <console>:24`. spark-shell also starts a Spark context web UI, which by default you can open at http://localhost:4040.

This completes the installation of Apache Spark on Windows 7, Windows 10, and later versions. Continue with the sections below to see how to use the Spark web UI for debugging and how to enable the Spark history server.

Apache Spark provides a suite of web UIs (Jobs, Stages, Tasks, Storage, Environment, Executors, and SQL). You can use them to monitor the status of your Spark application, the resource consumption of the Spark cluster, and the Spark configuration. On the Spark web UI, you can see how each operation is executed.

The history server keeps a log of every Spark application you submit through spark-submit or spark-shell. To enable Spark to collect these logs, add the following properties to the spark-defaults.conf file in the %SPARK_HOME%/conf directory:

- `spark.eventLog.enabled true`
- `spark.eventLog.dir file:///c:/logs/path`, where applications write their event logs
- `spark.history.fs.logDirectory file:///c:/logs/path`, where the history server reads them

After setting these properties, start the history server with `%SPARK_HOME%\bin\spark-class.cmd org.apache.spark.deploy.history.HistoryServer`. By default the history server listens on port 18080, so you can open it in a browser at http://localhost:18080. Click an App ID to see that application's details in the Spark web UI.
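If you prefer to script the history-server configuration, the Python sketch below appends the event-log properties to `spark-defaults.conf`. The helper names (`history_properties`, `merged_conf`, `append_to_spark_defaults`) are hypothetical. The directory `file:///c:/logs/path` is the example path from this guide.

The script points `spark.eventLog.dir` and `spark.history.fs.logDirectory` at the same directory. Otherwise, applications would write logs to one place while the history server looks in another. Spark generally expects this directory to exist already, so create it before starting spark-shell or the history server.

```python
"""Append history-server properties to spark-defaults.conf (a sketch).

Assumption: spark_home points at a Spark installation with a conf/ directory.
"""
from pathlib import Path


def history_properties(log_dir_uri: str) -> str:
    """Return spark-defaults.conf lines (key, whitespace, value) for event logging."""
    return (
        "spark.eventLog.enabled true\n"
        f"spark.eventLog.dir {log_dir_uri}\n"             # where applications write logs
        f"spark.history.fs.logDirectory {log_dir_uri}\n"  # where the history server reads them
    )


def merged_conf(existing: str, log_dir_uri: str) -> str:
    """Return the existing conf text with the history properties appended."""
    if existing and not existing.endswith("\n"):
        existing += "\n"  # keep the last existing property on its own line
    return existing + history_properties(log_dir_uri)


def append_to_spark_defaults(spark_home: str, log_dir_uri: str) -> Path:
    """Write the merged properties to <spark_home>/conf/spark-defaults.conf."""
    conf_file = Path(spark_home) / "conf" / "spark-defaults.conf"
    existing = conf_file.read_text(encoding="utf-8") if conf_file.exists() else ""
    conf_file.write_text(merged_conf(existing, log_dir_uri), encoding="utf-8")
    return conf_file
```

For example, `append_to_spark_defaults(os.environ["SPARK_HOME"], "file:///c:/logs/path")` (after `import os`) creates `spark-defaults.conf` if it does not exist yet. Running it twice appends the properties twice, so check the file first if you are unsure whether it has already been configured.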
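Besides clicking through the UI, you can list the App IDs the history server knows about through Spark's monitoring REST API at `/api/v1/applications`. The Python sketch below assumes the history server is running locally on its default port 18080. The helper names (`fetch_text`, `list_applications`) are hypothetical, and only the `id` and `name` fields of each application entry are used.

```python
"""List application IDs from the Spark history server REST API (a sketch).

Assumption: the history server runs locally on the default port 18080.
"""
import json
import urllib.request
from typing import Callable, List, Tuple

HISTORY_URL = "http://localhost:18080"


def fetch_text(url: str) -> str:
    """Download a URL and return its body decoded as UTF-8."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8")


def list_applications(
    base_url: str = HISTORY_URL,
    fetch: Callable[[str], str] = fetch_text,
) -> List[Tuple[str, str]]:
    """Return (app ID, app name) pairs from <base_url>/api/v1/applications."""
    apps = json.loads(fetch(f"{base_url}/api/v1/applications"))
    return [(app["id"], app.get("name", "")) for app in apps]
```

For example, `for app_id, name in list_applications(): print(app_id, name)` prints one line per logged application. The same API is served by a running application's web UI, so `list_applications("http://localhost:4040")` should also work while spark-shell is open. Injecting the `fetch` function keeps the JSON handling testable without a live server.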